Context Engineering in Practice: Where Does Each Piece Go?

Anthropic, Martin Fowler, and Redmonk all named context engineering the #1 2026 skill shift. But almost every guide on the topic stops at the same place: write a CLAUDE.md, add a skill, sprinkle some MCP, ship it. None of them answer the question that actually blocks teams when they sit down to do this work.

Which piece of context belongs where?

A 2025 Qodo survey put the cost of getting that wrong on the table. 54% of developers who hand-pick context still report the AI misses relevance, and the rate is worst for seniors (52%, up from 41% for juniors) (Qodo, 2025). The pain isn’t lack of context. It’s misallocated context. The right rule sitting in the wrong file produces the same failure as no rule at all, with one bonus: the agent follows it confidently.

This post is the decision framework. Four surfaces, one matrix, token math, and the anti-patterns that break each surface. It’s drawn from a year-plus of running this stack on shipped tooling (Pylon, agent-skills, the LLM wiki, a local code intelligence MCP), so the rules are descriptive, not aspirational.

Key Takeaways

- Context engineering is allocation, not authoring. The hard part is deciding which surface each piece of context belongs in, not what to write.

- Four surfaces, four cost profiles: CLAUDE.md is always loaded, skills load on match, memory loads on demand, MCP is dynamic at query time.

- The cliffs are real. Past ~200 lines, CLAUDE.md starts hurting more than helping. Past 50 skills, only progressive disclosure keeps token cost sane: Microsoft’s research shows it cuts catalog cost by 94.7% (Microsoft Agent Skills, 2026).

- The single highest-leverage move: prune your CLAUDE.md down to what’s been load-bearing in the last 30 days. That alone correlates with ~40% fewer “bad suggestion” sessions (Redmonk, 2025).

Why did context engineering become the 2026 skill shift?

Models stopped being the bottleneck in 2026; context did. Anthropic’s engineering team notes that context exhibits n² pairwise token relationships, so longer context isn’t free, it’s quadratically more expensive in attention (Anthropic, 2025). The discipline shifted from “what to ask” to “what the model sees, and from where.”

You can see the rebrand cleanly in the literature. Anthropic’s piece introduces a vocabulary the industry adopted overnight: compaction, structured note-taking, just-in-time retrieval. Martin Fowler split prompts into two classes (instructions and guidance) and named the surfaces that carry them (Martin Fowler, 2026). The O’Reilly book “Agentic Coding with Claude Code” puts a chapter on context engineering as Chapter 1, before tools, before workflows, before anything else.

The data behind the shift is the part most posts skip.

Redmonk’s 2025 survey of agentic IDE users found that teams maintaining a CLAUDE.md report ~40% fewer “bad suggestion” sessions than teams without one (Redmonk, 2025). A Stanford and SambaNova paper on Agentic Context Engineering (ACE) found that incremental, structured context updates reduce drift and latency by up to 86% compared to unmanaged approaches (arXiv, 2025). And Chroma’s research on 18 frontier LLMs reached the punchline directly: “performance grows increasingly unreliable as input length grows” (Chroma Research, 2025).

Three independent groups, three completely different methodologies, one finding. The model isn’t the variable that moves outcomes anymore. The context is.

What is context engineering, and how is it different from prompt engineering?

Prompt engineering is “what’s in this single message.” Context engineering is “what the agent sees across an entire session, from every surface.” The difference is durable: prompts are ephemeral; context surfaces persist, compose, and have token budgets that compete with each other. The leverage moved from generation quality to context quality, which matters more now that 41% of all code shipped is AI-generated or AI-assisted (Index.dev, 2026).

A two-line analogy helps. Prompt engineering is the sentence the agent reads when you talk to it. Context engineering is the syllabus the agent has been reading all semester. The model is a reader. The prompt is the question. Context is the reading list, and the reading list is what decides whether the answer lands.

That distinction shows up immediately in token math. Bytes spent on a stale CLAUDE.md rule are bytes you can’t spend on the user’s question. A bloated skill catalog crowds out the working memory the agent needs to actually solve the problem. The discipline is no longer “write better prompts.” It’s “decide what the model sees, when it sees it, and from where it gets fetched.”

Where should each piece of context live?

Four surfaces matter, and each has a different cost profile. CLAUDE.md is always loaded. Skills load on match. Memory loads on demand. MCP is dynamic at query time. Microsoft’s progressive-disclosure research makes the cost difference concrete. Naive loading of a 50-skill catalog burns 150,000 tokens. Metadata-first loading uses 8,000 tokens, a 94.7% saving (Microsoft Agent Skills, 2026). Picking the right surface isn’t a stylistic call. It’s an order-of-magnitude budget decision.

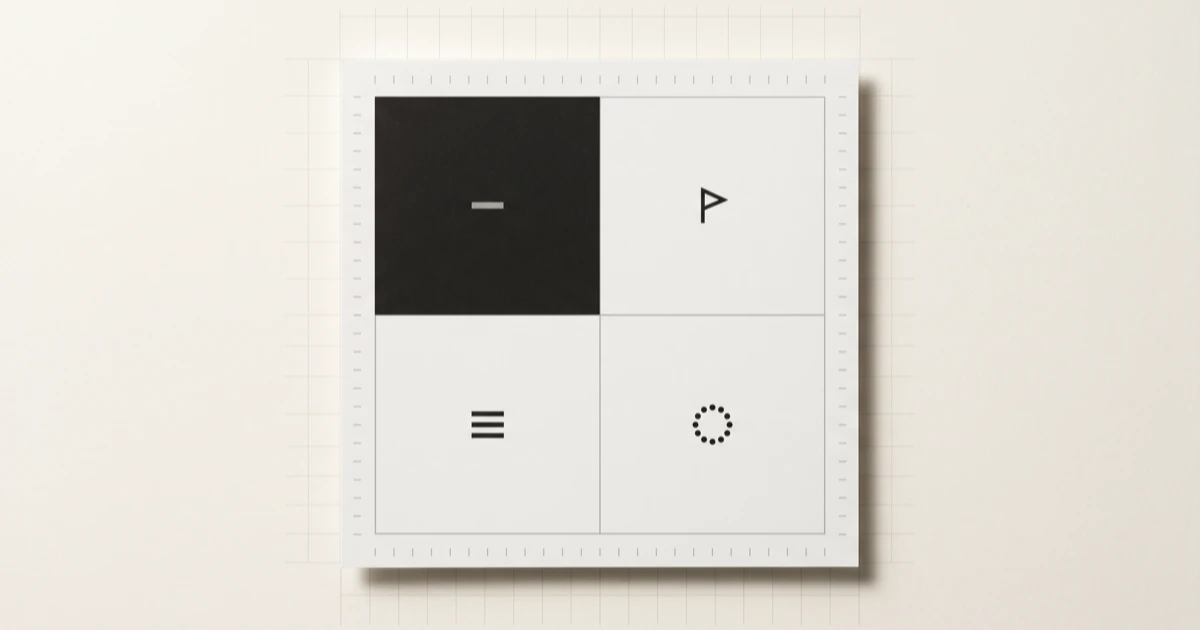

Most teams I’ve watched build this stack pick the surface by accident: whichever file they happened to open first. The cleaner heuristic is to ask two questions about the piece of context in front of you. Is it stable, or does it evolve? Is it always relevant, or only sometimes? The four answers map to the four surfaces.

| Always relevant | Sometimes relevant | |

|---|---|---|

| Stable | CLAUDE.md (always loaded). Example: “use Bun, not Node.” | Skill (loads when triggered). Example: “how we write a migration.” |

| Evolves | Auto memory (distilled, reread on need). Example: “this user wants terse output.” | MCP (dynamic, query-time). Example: “call hierarchy of handleAuth.” |

The matrix isn’t a style guide. It’s a forcing function. Picking the cell forces a single question: is this load-bearing every session, or load-bearing on a trigger? That’s the question most teams skip. The result is the pattern you see everywhere: CLAUDE.md files full of rules nobody reads, skill catalogs full of skills nobody triggers.

The most common mistake is putting an “evolves and always relevant” rule in CLAUDE.md. It rots within weeks, the agent follows the stale version confidently, and nobody notices until production breaks. That class of rule belongs in auto memory, where it can be rewritten the next time it’s wrong without bloating the always-loaded surface.

How tight should CLAUDE.md actually be?

A useful CLAUDE.md is under ~200 lines. Past that, signal drops fast. Chroma’s research on 18 frontier LLMs found that performance grows increasingly unreliable as input length grows (Chroma Research, 2025). Bigger CLAUDE.md isn’t safer; it’s noisier, and the agent confidently follows the loudest stale rule.

A good CLAUDE.md covers four things and nothing else.

Quick-start commands, so the agent never guesses how to run your tests, build your code, or start a dev server. Key conventions, like which UI library belongs in which package, which logger you use, or how you structure error handling. Code search priorities, so the agent reaches for structural tools before grep where available. The skills catalog index, so the agent knows what specialized workflows exist before it reinvents one.

What the file is not: a dump of every preference you’ve ever had. Past a couple hundred lines, CLAUDE.md starts crowding the agent’s context window and drowning out the signals that matter.

Our finding: Across the projects I run this stack on, the CLAUDE.md files that earn their place all sit between 120 and 200 lines, around 1,800 to 2,800 tokens. The one that crept past 400 lines visibly degraded agent output. The agent kept latching onto a stale rule about a refactor that had already shipped. Pruning back under 200 fixed it the same day.

The cliff isn’t theoretical. It’s where the always-loaded budget starts competing with the user’s question for attention.

The audit cadence matters as much as the size. CLAUDE.md rots the same way any documentation rots. A rule that was load-bearing six months ago might describe an API you’ve since replaced. Review the file after any significant refactor, when an AI session confidently does the wrong thing, and whenever it crosses 200 lines. For more on the broader stack this file sits inside, the layered scaffolding around the model covers each tier in depth.

When does a piece of context belong in a skill, not a prompt?

A piece of context belongs in a skill when it’s a discipline you want enforced and it has a clear trigger. Skills implement progressive disclosure: descriptions sit in context, full bodies load only on invocation. That’s how a 50-skill catalog uses 8,000 tokens of metadata instead of 150,000 tokens of full bodies, a 94.7% saving (Microsoft Agent Skills, 2026).

Two flavors of skill earn their place. Process skills enforce discipline: brainstorming before building, systematic debugging before fixing, verification before claiming “done,” test-driven development before writing implementation code. Implementation skills encode codebase-specific workflows: how your ORM expects migrations, how your feature flags are wired, how your release pipeline actually runs.

The discipline ones matter more than the implementation ones. Implementation can always be done a different way. Discipline is what stops the agent from band-aiding its way through a subtle bug for five rounds in a row.

The piece most people miss is that the skill description is the only part the agent always sees. The body is the doctrine; the description is the discoverable index entry. If the description is vague, the agent never finds the skill. If it’s hyper-specific, you end up writing a separate skill for every variation. Get the description right and the rest of the catalog scales.

Before you write your own skills, install what’s already out there. Superpowers ships a catalog of discipline skills (brainstorming, systematic debugging, verification, TDD, plan execution) that covers most of what any team uses day-to-day. The agent-skills pack I maintain encodes a different layer: ways of thinking about structure (complexity accounting, module-secret auditing, seam finding) distilled from foundational software engineering texts. Don’t rebuild generic discipline. Install it. Reserve your authoring effort for the workflows your codebase actually invented.

What’s the right place for memory and MCP?

Memory captures lessons that should survive sessions. MCP captures live, expensive-to-recompute context that should never sit in a prompt. MCP crossed 10,000 servers and 97 million monthly downloads in its first year (Pento.ai, 2025), so the dynamic-retrieval surface is now standard infrastructure, not a niche.

Three memory types do different work and shouldn’t be confused.

Project memory lives in files (bugs.md, decisions.md, key_facts.md, issues.md) and gets read on demand. Auto memory is a curated MEMORY.md of distilled rules, one or two lines each, always loaded. Episodic memory is the full searchable history of past conversations, queried when the agent needs the reasoning behind a distilled rule. The same idea underpins Andrej Karpathy’s LLM wiki pattern: synthesize on write, not on read. Each insight stays available to the next session, never rediscovered from scratch.

A useful side effect of naming the surface is the promotion path. A CLAUDE.md rule that turns out to be conditional should get demoted into a skill. A debugging insight that turns out to be repeatable should get promoted into auto memory. A one-off observation that won’t recur should get logged in episodic memory and forgotten. The matrix tells you the destination.

MCP is the runtime layer. It carries context the agent can’t pre-load because it changes between turns: the current call hierarchy of a function, the references to a symbol after a recent commit, the blast radius of a rename. I’ve written about why local code intelligence matters in depth. The short version: text search can’t see structure, and MCP is the protocol that finally lets the agent ask the right kind of question. Pre-loading that data is a category error. Fetching it on call is the right shape.

What does wrong context engineering look like?

Misallocation, not absence, is the failure mode. The Opsera AI Coding Impact Benchmark Report analyzed 250,000+ developers across 60+ enterprise organizations. Without governance, AI-generated PRs wait 4.6x longer in review, and AI-generated code introduces 15 to 18% more security vulnerabilities as autonomy expands (Opsera, 2026). Context engineering is the cheapest governance layer most teams skip, and the failure modes are predictable. The applied case for the same allocation discipline at the merge gate is AI as the first line of PR review: same surfaces, same trade-offs, different use site.

The 600-line CLAUDE.md. The agent follows the loudest rule, not the most relevant one. Symptom: confidently wrong patches that cite a stale convention. Right home: prune to the last-30-day load-bearing rules; promote conditional rules into skills; demote evolving facts into auto memory.

The skill that’s a paragraph in a file. The skill never triggers because the description is too vague, or it triggers on everything because the description is too generic. Symptom: skill exists, never fires, agent reinvents the workflow inline. Right home: rewrite as a CLAUDE.md rule if it’s truly always relevant, or rewrite the description to make the trigger crisp.

Memory used as a TODO list. MEMORY.md balloons to 500 lines of “remember to” entries. Symptom: the always-loaded budget gets crowded out by aspirations the agent can’t act on anyway. Right home: distilled lessons only, one or two lines each. TODOs belong in your task tracker, not in the agent’s working context.

MCP queries that return everything. The tool returns 50 results because the schema is sloppy or the ranking is naive. Symptom: token cost spikes per query, useful results buried in noise. Right home: tighter tool schemas; structural ranking instead of raw search; structural signals that prioritize the public API surface over test files.

No measurement loop. Nobody audits which CLAUDE.md rules still earn their place. Symptom: the file rots, the agent’s output rots with it, and nobody can name the moment quality slipped. Right home: a 30-day audit cadence baked into the team’s process. Run it on a calendar, not on vibes.

The class of failure all five share is the same: the right rule, in the wrong cell. Naming the cell turns context engineering from “writing files” into “allocating budget.”

Frequently Asked Questions

What’s the difference between prompt engineering and context engineering?

Prompts are per-message; context engineering is the entire surface area the agent sees over a session. Anthropic frames the discipline around just-in-time retrieval and structured note-taking, not “write better instructions” (Anthropic, 2025). The leverage moved upstream of any single message.

How big can my CLAUDE.md be before it hurts me?

Past ~200 lines or roughly 3,000 tokens, signal drops fast. Chroma’s 2025 research on 18 frontier LLMs found that performance grows increasingly unreliable as input length grows (Chroma Research, 2025). The cliff is real, and bigger isn’t safer.

When should I write my own skill instead of installing one?

Install community packs (Superpowers, agent-skills) for generic discipline (debugging, brainstorming, verification, plan execution). Write your own only for codebase-unique workflows: how your ORM expects migrations, how your release pipeline runs, how your feature flags are wired. Don’t rebuild generic discipline.

Does context engineering cost more tokens?

Counter-intuitively, no. Microsoft’s progressive-disclosure benchmark shows ~94.7% context savings on a 50-skill catalog versus naive loading (Microsoft Agent Skills, 2026). The cost is in design, not tokens. Allocate by surface and you spend fewer tokens for better results.

What’s the single highest-leverage move I can make today?

Open CLAUDE.md, count its lines, and prune anything that hasn’t been load-bearing in the last 30 days. That single audit correlates with the ~40% reduction in “bad suggestion” sessions Redmonk reported in 2025 (Redmonk, 2025), and it costs about 30 minutes.

The Real Argument

Context engineering is allocation, not authoring. Once you accept that, most of the discipline collapses into a single question, asked of every rule: which surface earns this? CLAUDE.md when it’s stable and always relevant. Skill when it’s stable and triggered. Auto memory when it evolves and stays load-bearing. MCP when it evolves and has to be fetched fresh. Tighter than 200 lines on the always-loaded surface. Progressive disclosure on everything else.

If you take one thing from this post, take this: the highest-leverage move isn’t writing more rules. It’s pruning the ones already there to the cell they actually belong in. The agent’s output doesn’t get better when you give it more context. It gets better when you give it the right context, in the right place, at the right time. The model is the same in both cases. The allocation is everything.

If it was useful, pass it along.